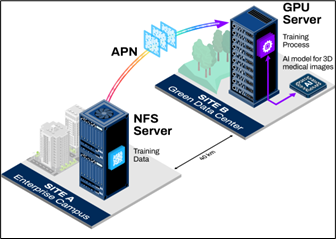

Figure 1. PoC architecture with storage at Site A and GPU compute at Site B over a 40 km APN link.

As demand for artificial intelligence (AI) grows, the ability to distribute Graphics Processing Unit (GPU) resources across distance is more than a technical breakthrough; it is a competitive advantage. An All-Photonics Network (APN) enables data centers to operate across significant distances with performance nearly identical to local processing. That near parity gives enterprises valuable flexibility in where they place GPU infrastructure.

The IOWN Global Forum’s Proof of Concept (PoC) completed AI training for 3D medical image recognition with a widely used model (UNet3D) across a 40 km APN link. This demonstrates how APN allows GPU servers and data storage to function as if they were co‑located, even when physically separated, which opens new options for how organizations design AI infrastructure.

Challenges of Traditional Architecture

Artificial intelligence workloads demand large, power-intensive resources. With AI adoption spreading globally, one major challenge is that AI training consumes considerable power, increasing both operating costs and environmental impact. Current architecture forces a trade-off: enterprises typically face two unsatisfactory options, GPU-as-a-Service (GPUaaS) or private GPU infrastructure on premises.

The first option, GPUaaS, provides several advantages. In this model, the GPU infrastructure can be operated in rural areas with space for renewable energy, and customers avoid the major cost and staffing burdens of owning GPU infrastructure. Shared access improves utilization of expensive hardware, offloads maintenance and specialized engineering needs, and protects organizations from rapid hardware obsolescence and repeated capital upgrades. Despite these benefits, GPUaaS has one large disadvantage: it requires uploading confidential data to a third party.

The second option, on-premises private GPU infrastructure, offers full control of data: confidential data never leaves the organization, so there is no risk of leakage to a third-party provider. Despite that advantage, there are several drawbacks. Private GPU infrastructure is very expensive to build and maintain. It requires specialized staff, consumes large amounts of electricity, and is usually located in urban regions with a higher carbon impact.

The Solution: What APN Enables

An APN makes it possible to place GPU servers and storage in different locations while still delivering performance that feels nearly local. The network’s ability to provide high-bandwidth and low-latency connections allows large AI training datasets to move efficiently, and these capabilities make remote GPU resources function as if they were located on site, even when deployed at significant distances.

A Realistic Deployment Scenario for APN-Enabled GPU Workloads

Although an APN can be deployed in many configurations, the following scenario helps illustrate how it works in practice. In this example, an organization maintains its headquarters or R&D center in an urban location while operating GPU servers in a rural facility powered by renewable energy. With this setup, sensitive data remains inside enterprise-controlled environments, which allows the organization to capture sustainability and cost advantages without creating exposure to data-leakage risks.

The model supports flexibility in how the remote GPU site is operated. The compute facility may be operated by a third-party GPUaaS provider or by the enterprise itself. In both cases, the enterprise can leverage remote GPU resources without placing confidential data at the remote GPU site. This approach allows enterprises to achieve significant sustainability and cost benefits, while retaining full control over their confidential data.

Business Benefits of Running GPU Workloads Over an All-Photonics Network

An All‑Photonics Network makes it possible to use remote GPU resources while keeping sensitive data within an organization’s own environment. This delivers the convenience of GPUaaS without the risk of uploading confidential information to a third party. It also supports carbon‑neutral computing by allowing workloads to run in locations that use renewable energy.

An APN also helps reduce overall IT costs. Organizations can avoid large capital investments because GPUaaS improves utilization through shared resources rather than dedicated hardware that often sits idle. Operational spending is lower as well, because the service provider handles maintenance, driver updates, performance tuning, and recovery tasks that would otherwise require specialized engineering support. Because photonics-based infrastructure makes remote GPUaaS practical and the provider manages hardware refresh cycles, organizations avoid frequent GPU replacements while keeping access to up-to-date performance. Together, these factors reduce both capital expenditure (CapEx) and operating expenditure (OpEx) while improving flexibility.

Real estate costs can also decrease. Because APN preserves performance across distance, GPU data centers can be located outside urban hubs where land and facilities are less expensive. This gives organizations more freedom to place infrastructure where it is most cost effective without compromising workload performance.

Proof of Concept Setup

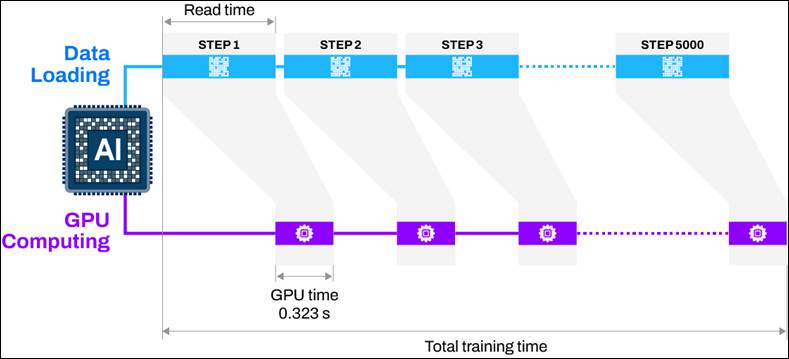

Figure 2. Training workflow showing parallel data loading and GPU computation in 56‑image batches across 5,000 steps.

The PoC tested AI training using the UNet3D model for 3D medical image recognition. The team ran the experiment across two data centers located 40 kilometers apart: storage remained at Site A, the GPU server operated at Site B, and the two locations were connected through APN optical paths.
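The PoC does not report the link's measured latency, but physics sets a floor on it. The sketch below estimates round-trip propagation delay over fiber, assuming a typical group index of about 1.468 for single-mode fiber (roughly 4.9 µs per kilometer) and a fiber path exactly equal to the 40 km site separation. Real routes are usually longer and equipment adds delay. The function name `fiber_rtt_ms` is illustrative.

```python
# Rough round-trip propagation delay over optical fiber.
# Assumption: light travels at about c / 1.468 in standard single-mode fiber,
# i.e. roughly 4.9 microseconds per kilometer. Equipment delay and route
# detours are ignored, so this is a lower bound, not a measurement.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_GROUP_INDEX = 1.468  # typical value, assumed


def fiber_rtt_ms(distance_km: float) -> float:
    """Round-trip propagation delay in milliseconds for a fiber path of distance_km."""
    one_way_s = distance_km * FIBER_GROUP_INDEX / SPEED_OF_LIGHT_KM_PER_S
    return 2 * one_way_s * 1000


rtt_40km = fiber_rtt_ms(40)
print(f"40 km fiber RTT ~ {rtt_40km:.2f} ms")  # prints: 40 km fiber RTT ~ 0.39 ms
```

Under these assumptions, a 40 km path contributes roughly 0.4 ms of round-trip delay, about one twenty-fifth of the 10 ms reference link used for comparison in the results.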

The dataset contained 28,000 images totaling 3.8 terabytes. Following standard AI training practice, the system loaded batches from the remote storage site in parallel with GPU computation, so that transferring upcoming batches overlapped with processing the current one. Each batch consisted of 56 images, and the full training process was divided into 5,000 steps.
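The PoC does not publish its data-loading code. As a hedged illustration of what loading batches in parallel with GPU computation means, the following standard-library sketch prefetches batches in a background thread through a bounded queue, so reading batch N+1 overlaps with computing on batch N. `prefetch`, `read_batch`, and the sleep-based timings are hypothetical placeholders, not the PoC's implementation. Training frameworks offer the same idea, for example PyTorch's `DataLoader` with `num_workers` and `prefetch_factor`.

```python
import queue
import threading
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")


class _Failure:
    """Carries an exception from the loader thread to the consumer."""

    def __init__(self, exc: BaseException) -> None:
        self.exc = exc


_DONE = object()  # sentinel: the loader has produced every batch


def prefetch(load_batch: Callable[[int], T], num_steps: int, depth: int = 2) -> Iterator[T]:
    """Yield load_batch(0), ..., load_batch(num_steps - 1) in order.

    A background thread keeps up to `depth` batches queued, so batch N+1 can be
    read from storage while the caller is still computing on batch N.
    """
    q: queue.Queue = queue.Queue(maxsize=depth)

    def loader() -> None:
        try:
            for step in range(num_steps):
                q.put(load_batch(step))  # blocks once `depth` batches are waiting
        except BaseException as exc:  # hand any loader error to the consumer
            q.put(_Failure(exc))
            return
        q.put(_DONE)

    threading.Thread(target=loader, daemon=True).start()
    while True:
        item = q.get()
        if item is _DONE:
            return
        if isinstance(item, _Failure):
            raise item.exc
        yield item


if __name__ == "__main__":
    import time

    def read_batch(step: int) -> list:
        # Hypothetical stand-in for reading 56 images from storage at Site A.
        time.sleep(0.01)
        return [f"image-{step * 56 + i}" for i in range(56)]

    start = time.perf_counter()
    for batch in prefetch(read_batch, num_steps=20):
        time.sleep(0.01)  # stand-in for one training step on the GPU at Site B
    print(f"elapsed: {time.perf_counter() - start:.2f} s")
```

Because the two stages overlap, each step costs roughly the slower of loading and computing rather than their sum. That is why a link with enough bandwidth can hide most of the remote-storage access time behind GPU work.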

Performance Results

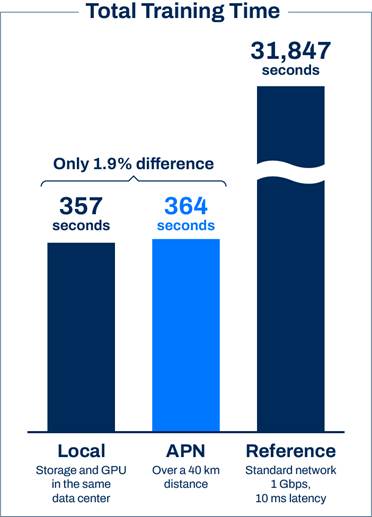

Figure 3. Training time comparison: APN over 40 km (364 s) is 1.9% slower than local (357 s), while a standard 1 Gbps/10 ms link is far slower (31,847 s).

The PoC measured how long it took to complete one full training cycle using the entire dataset (one “epoch”). With local storage and a local GPU, the training required 357 seconds. This configuration delivers strong performance but is often impractical because of space, power, and cost constraints in urban areas.
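The published figures also allow a few consistency checks. The sketch below assumes decimal units (1 TB = 10^12 bytes) and that each epoch reads the complete 3.8 TB dataset exactly once; the PoC states neither explicitly. All names are illustrative.

```python
# Back-of-envelope figures derived from the published PoC numbers.
# Assumptions: decimal units and one full read of the 3.8 TB dataset per epoch.
DATASET_IMAGES = 28_000
DATASET_BYTES = 3.8e12
BATCH_SIZE = 56
TOTAL_STEPS = 5_000

steps_per_epoch = DATASET_IMAGES // BATCH_SIZE       # 500 steps
epochs_in_run = TOTAL_STEPS / steps_per_epoch        # 10 epochs
avg_image_mb = DATASET_BYTES / DATASET_IMAGES / 1e6  # ~136 MB per 3D image
batch_gb = DATASET_BYTES / steps_per_epoch / 1e9     # 7.6 GB per batch


def effective_gbps(epoch_seconds: float) -> float:
    """Average rate (Gbit/s) needed to read the whole dataset once in epoch_seconds."""
    return DATASET_BYTES * 8 / epoch_seconds / 1e9


print(steps_per_epoch, epochs_in_run, round(avg_image_mb), round(batch_gb, 1))
print(f"local: {effective_gbps(357):.1f} Gbit/s, APN: {effective_gbps(364):.1f} Gbit/s")
```

On these assumptions, the 5,000-step run spans ten epochs of 500 steps each, and the 357-second local epoch implies an average read rate of about 85 Gbit/s. The 364-second APN epoch would correspond to roughly 83.5 Gbit/s sustained across the 40 km link, which gives a sense of the bandwidth that near-local training of this model requires.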

Alongside this local baseline, the same training was run in two other configurations:

- Storage at Site A and the GPU server at Site B, connected over the 40 km APN link, and

- A reference point-to-point link with 1 Gbps bandwidth and 10 ms round-trip latency, intended to emulate a typical internet connection.

Under these internet-like conditions, one epoch required 31,847 seconds, roughly 89 times longer than the local baseline and far too slow for practical AI workloads.
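Bandwidth alone largely explains why the reference link is so slow. Assuming decimal units and that each epoch reads the full 3.8 TB dataset once, with no compression or caching (the PoC states none of these), the sketch below computes the minimum time needed just to move the data over a 1 Gbit/s link.

```python
# Lower bound on one epoch over the 1 Gbit/s reference link.
# Assumptions: decimal units, one full read of the 3.8 TB dataset per epoch,
# transfer at full line rate, and no compression or caching.
DATASET_BYTES = 3.8e12
LINK_BITS_PER_S = 1e9
MEASURED_S = 31_847
LOCAL_S = 357

transfer_floor_s = DATASET_BYTES * 8 / LINK_BITS_PER_S  # time just to move the bytes
share_of_measured = transfer_floor_s / MEASURED_S
print(f"transfer floor: {transfer_floor_s:,.0f} s "
      f"({share_of_measured:.0%} of the measured {MEASURED_S:,} s)")
print(f"measured vs. local: {MEASURED_S / LOCAL_S:.0f}x slower")
```

On these assumptions, raw transfer accounts for 30,400 seconds, about 95 percent of the measured epoch time. This suggests the reference configuration was limited mainly by its bandwidth rather than by its 10 ms round-trip latency.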

Over the APN, with storage and GPU 40 kilometers apart, one epoch took 364 seconds, compared with 357 seconds locally. That is only 1.9 percent slower than local processing, demonstrating that an APN maintains performance close to local operation even when compute and storage are separated.
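The 1.9 percent figure is the relative difference between the two epoch times. The sketch below recomputes it from the published whole-second values and, assuming those values were rounded to the nearest second (the PoC does not say), brackets the range of true slowdowns they are consistent with. `slowdown_pct` is an illustrative name.

```python
def slowdown_pct(remote_s: float, local_s: float) -> float:
    """Percentage by which a remote epoch is slower than the local epoch."""
    return (remote_s / local_s - 1) * 100


# From the published, whole-second epoch times.
print(f"{slowdown_pct(364, 357):.2f}%")  # prints: 1.96%

# If each time was rounded to the nearest second, the true slowdown lies in this range.
low = slowdown_pct(363.5, 357.5)
high = slowdown_pct(364.5, 356.5)
print(f"{low:.2f}% to {high:.2f}%")  # prints: 1.68% to 2.24%
```

The rounded inputs give about 1.96 percent, and the bracket runs from about 1.7 to 2.2 percent, so the reported 1.9 percent is consistent with the published times.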

These findings indicate that APN makes it viable to place GPU infrastructure in remote or lower-cost facilities without sacrificing training speed. This creates a practical path to more sustainable and cost‑effective AI operations. With this setup, enterprises can run demanding workloads in remote data centers that support carbon‑neutral strategies while still achieving performance suitable for production‑grade AI applications.

Conclusion

This PoC demonstrates the business impact of the flexibility that an All‑Photonics Network provides. APN allows organizations to place GPU servers in locations where costs are lower, which improves overall cost efficiency. It also supports sustainability goals because GPU resources can be located where cleaner energy is available. Throughout the process, sensitive data remains within environments controlled by the enterprise, which strengthens security and reduces risk.

This work represents an early step. Experiments in other use cases have already extended to longer distances, including 200 kilometers and even 1,000 kilometers, and have confirmed data-access throughput at those scales. These results show the potential for APN to support a wide range of AI workloads across large geographic areas.

To learn more, join us at upcoming industry events to discover how photonics-based infrastructure can unlock new opportunities and enable sustainable growth at scale.